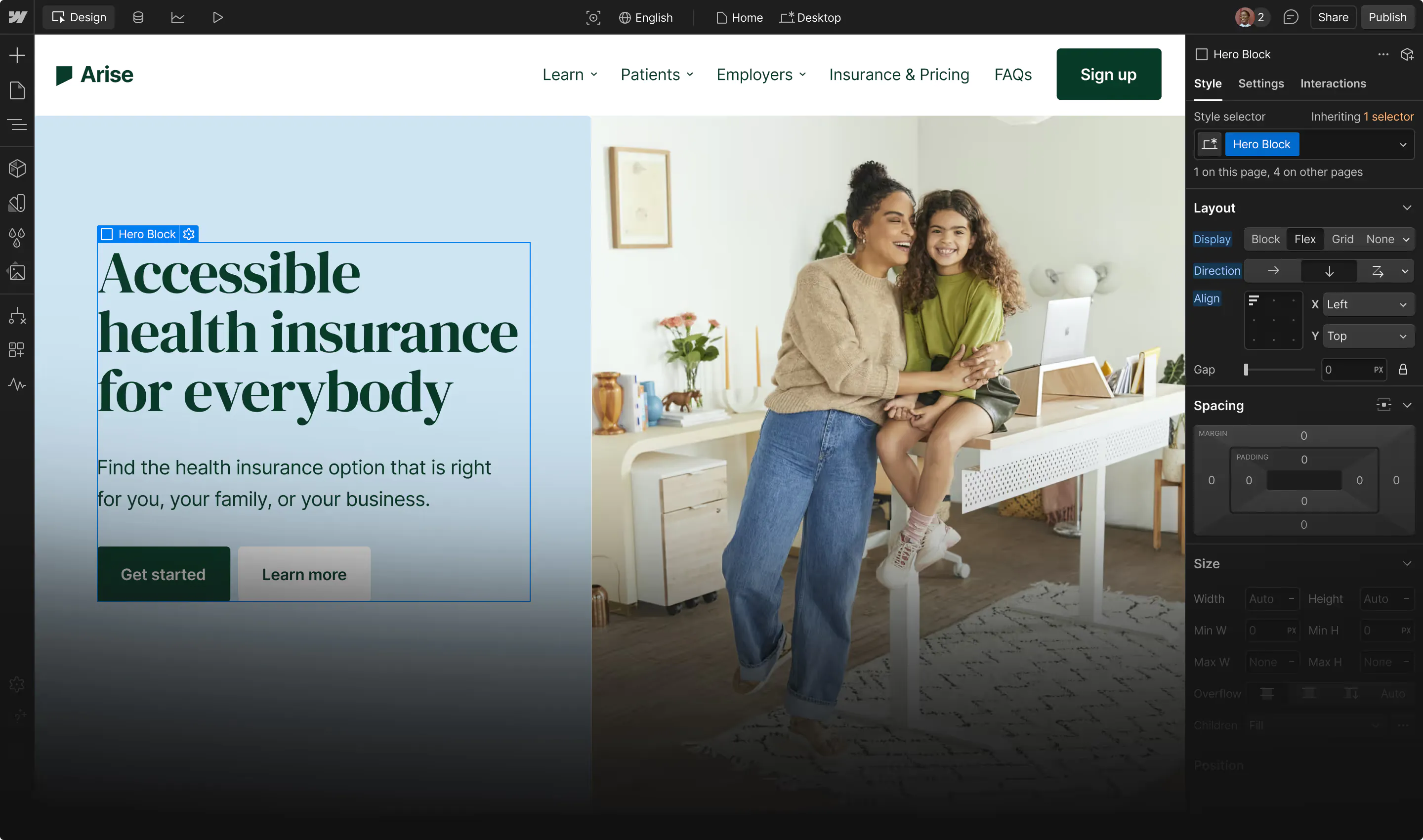

Codeflow, the local development environment we use for engineering interviews at Webflow, is available on GitHub. It replaces browser-based coding platforms with something closer to how engineers actually work: your machine, your IDE, your tools.

Why we built it

Browser-based interview editors introduce noise that has nothing to do with engineering ability. Unfamiliar keybindings, latency, no access to your terminal or extensions. Candidates spend mental energy fighting the tool instead of solving the problem. We wanted to remove that variable entirely.

We also wanted interviews where AI usage is visible and evaluable. When candidates work locally with screen sharing, we see the full workflow: how they context-switch between code, terminal, and an LLM; how they formulate prompts; and how they integrate or discard the output. That's a much richer signal than "did they get the right answer," and it brings our interviews closer to how our engineers actually spend their days.

How it works

Codeflow is a React shell. Clone the repo, install dependencies, run the dev server.

```shell
git clone https://github.com/webflow/codeflow.git
cd codeflow
npm install
npm start
```

Codeflow itself contains no interview-specific source code. At the start of the interview, the candidate receives a zip file, drops it into src/interviews/, and the exercise loads into the running app.

From there, the candidate is working in a real codebase. Multiple files. Existing features. Component hierarchies, state management, and intentional rough edges. The kind of thing you'd encounter in your first week at any product company shipping at scale.
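The post doesn't describe how the shell picks up a dropped-in exercise, so what follows is only a sketch of one way a React shell could do it, not Codeflow's code. It assumes each zip unpacks into its own folder under `src/interviews/` with an `index` entry point, and that the bundler hands the shell a map from module paths to loaded modules. `ExerciseModule`, `buildRegistry`, and the folder layout are hypothetical names chosen for illustration.

```typescript
// Hypothetical exercise contract; Codeflow's real module shape is not documented here.
interface ExerciseModule {
  title: string;
}

// Hypothetical registry builder: keys exercises by folder name, assuming paths like
// "./interviews/todo-list/index.tsx" (one folder per unzipped exercise).
function buildRegistry(
  modules: Record<string, ExerciseModule>,
): Map<string, ExerciseModule> {
  const registry = new Map<string, ExerciseModule>();
  for (const [path, mod] of Object.entries(modules)) {
    // Only top-level index files count; nested component files are ignored.
    const match = /\/interviews\/([^/]+)\/index\.(t|j)sx?$/.exec(path);
    const id = match?.[1];
    if (id) {
      registry.set(id, mod);
    }
  }
  return registry;
}
```

With Vite, for example, `import.meta.glob("./interviews/*/index.tsx", { eager: true })` returns an object keyed by path in this shape, and webpack's `require.context` serves the same purpose. Which bundler Codeflow uses isn't stated, so treat either wiring as an assumption.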

Designing exercises that reflect real work

The shell is intentionally generic. The interview exercises you plug into it are where the design thinking lives, and we think the structure of an exercise matters more than the specific problem being asked.

Traditional interview questions tend to isolate skills: implement a data structure, solve a constrained algorithm. These can still be useful in narrow contexts, but they don’t reflect how engineering work actually shows up, especially in an environment where AI can handle much of the mechanical execution.

A few principles guide how we think about exercise design:

Start with an existing system, not a blank slate

Most engineering work isn’t greenfield. It involves understanding code that already exists, figuring out where it breaks down, and making changes without introducing new issues. Exercises should reflect that: multiple files, established patterns, some intentional rough edges.

Layer problems instead of isolating them

Work compounds. Fixing one issue often reveals another. Adding a feature can introduce performance or state complexity. Exercises that build in layers (first understanding the code, then extending it, then refining it) make it easier to see how a candidate’s decisions carry forward.

Evaluate across different dimensions of engineering

Strong engineering isn’t just about correctness. It involves balancing tradeoffs, thinking about the user, and anticipating edge cases. We design exercises that surface:

- Core fundamentals (does it work, and why)

- Product and UX thinking (does it behave well under real usage)

- System design instincts (how does this scale or break)

Make tool usage visible

In practice, engineers work with tools, including AI. Rather than restricting that, exercises can be structured so usage is observable. Working in a local environment with screen sharing means we see how prompts are formed, how outputs are evaluated, and how suggestions are integrated or discarded. That interaction often reveals more than the output itself.

Remove friction that doesn’t matter

Unfamiliar editors, missing tooling, or artificial constraints introduce noise. If it doesn’t tell us something about how a candidate thinks, it shouldn’t be in the interview. Allowing candidates to use their own setup produces more representative work.

The specific exercises we run at Webflow follow these patterns — layered tasks, real-world constraints, and open-ended paths — but the underlying structure is what matters most. Whatever problems you design for your team, these principles should hold.

AI is part of the workflow

We don't restrict tooling. Copilot, Claude, ChatGPT, grepping through the docs, or whatever else you'd use on the job. The interview is screen-shared, so we see everything in real time. That's the point.

What we're evaluating is the gap between what the model gives you and what you ship.

A candidate debugging a component that re-renders on every parent state update doesn't paste the file into a chat window and ask it to "optimize." They isolate the problem, then ask something specific: compare useMemo on the derived value vs. lifting state to a shared context, given frequent parent updates and the app's existing data flow. They read the response critically, take one part, discard another, and combine it with what they already know about the codebase. When we ask why they chose that path, they can explain it without looking at the chat history.

Vague prompts, suggestions pasted without being read, an inability to explain what changed: that's where AI becomes a crutch instead of a multiplier, and it's obvious almost immediately.
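The debugging example above weighs `useMemo` against restructuring state. As a framework-free sketch of what the `useMemo` side buys, the hypothetical `memoizeLast` helper below caches the last result and recomputes only when an input changes, comparing each dependency with `Object.is`, which is how React compares `useMemo` dependencies. It is not React's implementation, and `memoizeLast` and `visibleItems` are illustrative names.

```typescript
// Hypothetical helper: remembers only the most recent inputs and result,
// similar in spirit to how useMemo caches one value per component.
function memoizeLast<Deps extends unknown[], R>(
  compute: (...deps: Deps) => R,
): (...deps: Deps) => R {
  let cache: { deps: Deps; result: R } | undefined;
  return (...deps: Deps): R => {
    // Reuse the cached result only if every dependency is identical (Object.is).
    const hit =
      cache !== undefined &&
      cache.deps.length === deps.length &&
      deps.every((dep, i) => Object.is(dep, cache!.deps[i]));
    if (!hit) {
      cache = { deps, result: compute(...deps) };
    }
    return cache!.result;
  };
}

// Example: a derived value (a filtered list) recomputed only when its inputs change.
let computeCount = 0;
const visibleItems = memoizeLast((items: string[], query: string) => {
  computeCount++;
  return items.filter((item) => item.includes(query));
});

const items = ["alpha", "beta", "gamma"];
visibleItems(items, "a"); // computes
visibleItems(items, "a"); // same references: cached, no recompute
visibleItems([...items], "a"); // new array identity: recomputes
```

Memoizing a derived value skips the recomputation but does not stop the component itself from re-rendering on every parent update, and a parent that rebuilds the array each render defeats the cache entirely. That gap is one reason a candidate might choose to move state instead.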

What we're assessing

Classic interview problems had a good run, but they've become a test of preparation rather than engineering. And with AI in the mix, they test even less. We needed interviews that select for the work: navigating a large-scale codebase, debugging performance issues across rendering layers, refactoring systems under real constraints, and balancing speed with sustainability.

With seniority, the bar shifts. At more senior levels, we expect candidates to ask clarifying questions before writing code, identify risks the exercise doesn't spell out, and make architectural choices that account for constraints beyond the immediate task.

Why we're open-sourcing it

Every engineering team we talk to is working through a similar question: how do you interview for a job where AI is part of the toolkit? Most are still figuring it out. We've been running Codeflow in production interviews for a while now, and it's changed what signal we can get from candidates. We wanted to share what's working.

Codeflow is on GitHub. Use it, fork it, take the parts that are useful. The shell is deliberately minimal so you can plug in whatever exercises make sense for your stack and your team.

If you're using Codeflow or building something similar, we'd genuinely like to hear how you've designed your process. The more teams share what's working, the faster we all get to interviews that actually measure what matters.

And if you're an engineer who already works this way, we're hiring.

→ Codeflow on GitHub
→ Open roles at Webflow
